The ATS/ERS standard for spirometry recommends reporting the highest FEV1 and the highest FVC even when they come from different tests. Our lab software allows us to do this, but only with some annoying limitations. One of the bigger limitations has to do with how expiratory time is reported. In particular, expiratory time is lumped in with a number of other values like Peak Flow (PEF) and FEF25-75, so they can only be selected together from the same effort. Just as importantly, the flow-volume loop and volume-time curve can only come from a single effort.

Our lab software defaults to choosing the single effort with the highest combined FVC+FEV1. The technician performing the tests will override this when other spirometry efforts have a larger FVC or a better FEV1 (which is chosen not just because it is higher but also on the basis of peak flow, back-extrapolation and other quality indicators). The usual order is to first choose the spirometry effort with the “best” FEV1; then, if a different effort has a larger FVC, that FVC is selected for reporting. When things are done this way, the expiratory time, flow-volume loop and volume-time curve that get reported all come from the effort selected for its FEV1. This means that the reported expiratory time and volume-time curve often don’t match the reported FVC.
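To make the mismatch concrete, here is a minimal Python sketch of the selection logic described above. It is not our lab software's actual code: the `Effort` class and both function names are hypothetical, and "best" FEV1 is simplified to "highest" FEV1, ignoring the quality indicators a technician would also weigh. The numbers are borrowed from the two efforts tabulated later in this post.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Effort:
    """One spirometry effort (hypothetical structure, for illustration only)."""
    label: str
    fvc: float
    fev1: float
    pef: float
    exp_time: float  # seconds


def default_effort(efforts):
    """Software default as described above: largest combined FVC + FEV1."""
    return max(efforts, key=lambda e: e.fvc + e.fev1)


def composite_report(efforts):
    """Composite selection: FEV1 effort first, then the largest FVC.

    Simplification: the highest FEV1 stands in for the "best" FEV1.
    PEF and expiratory time follow the FEV1 effort, not the FVC effort.
    """
    fev1_effort = max(efforts, key=lambda e: e.fev1)
    fvc_effort = max(efforts, key=lambda e: e.fvc)
    return {
        "fev1": fev1_effort.fev1,
        "fvc": fvc_effort.fvc,
        "pef": fev1_effort.pef,
        "exp_time": fev1_effort.exp_time,      # what the report shows
        "fvc_exp_time": fvc_effort.exp_time,   # what the FVC effort actually had
        "is_composite": fev1_effort is not fvc_effort,
    }


efforts = [
    Effort("Blue", fvc=2.53, fev1=2.19, pef=6.94, exp_time=3.05),
    Effort("Red", fvc=2.54, fev1=2.13, pef=5.07, exp_time=5.09),
]
report = composite_report(efforts)
print(default_effort(efforts).label)
print(report)
```

In this sketch the reported FVC comes from one effort while the reported expiratory time comes from the other, which is exactly the situation that sends me back to the raw data.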

I always take a look at the raw test data whenever a spirometry report comes across my desk with an expiratory time less than 6 seconds or with a technician’s note that the spirometry effort is a composite. What I often find is that even though the reported expiratory time may be low, the FVC actually comes from an effort with an adequate expiratory time. Although I can select the right expiratory time, doing so also selects that effort’s PEF, and the PEF from the effort with the highest FVC is often significantly lower than the PEF from the effort with the best FEV1. The same problem applies to selecting the volume-time curve, since the flow-volume loop that comes with it often isn’t the one from the effort with the best FEV1 and best PEF. For these reasons I only select the correct expiratory time and volume-time curve when doing so doesn’t really affect the flow-volume loop and PEF.

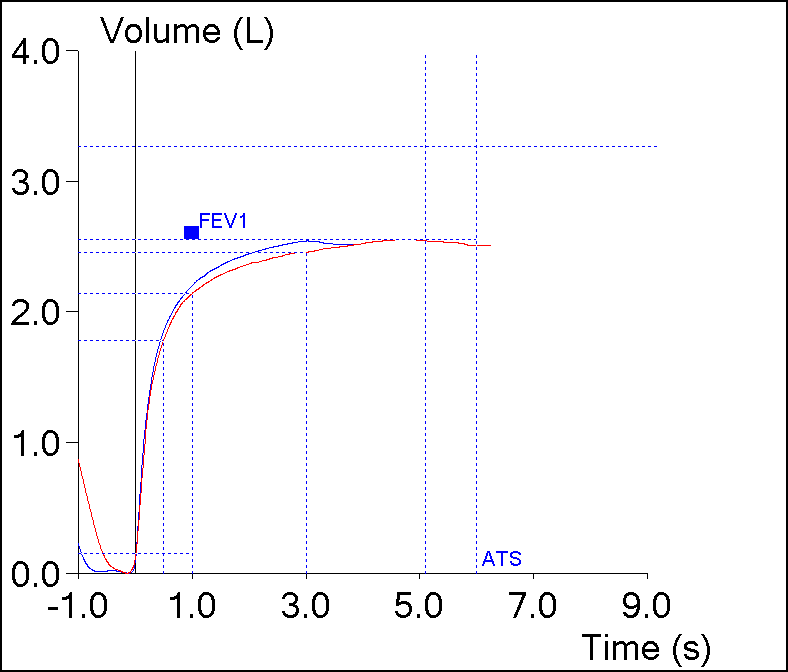

Even so, I’ve always assumed that the expiratory time from the effort with the highest FVC was probably the most correct expiratory time. Yesterday, however, this spirometry effort came across my desk:

| | Blue | Red |
|---|---|---|
| FVC: | 2.53 | 2.54 |
| FEV1: | 2.19 | 2.13 |
| FEV1/FVC: | 86 | 84 |
| PEF: | 6.94 | 5.07 |
| Exp. Time: | 3.05 | 5.09 |