Recently my lab has had some turnover with a couple of older staff leaving and new staff coming on board. While reviewing reports I’ve found a number of instances where the incorrect FVC and FEV1 were reported. Taking these as “teachable moments” I’ve been annoying the staff with emails whenever I find something notably wrong. I had thought that our rules for selecting the best FVC and FEV1 were fairly straightforward but given the number of corrections I’ve made lately it seemed like it would be a good idea to revisit our policy on this subject.

The process I’ve used for selecting the best FVC and FEV1 has evolved over the years. Initially I was told to select the single spirometry effort that had the largest combined FVC and FEV1. Later on test quality became a factor (not that it wasn’t in the beginning, but there aren’t a lot of quality indicators for a pen trace on kymograph paper). How to juggle the different quality rules wasn’t altogether clear, however (they seemed to change a bit with whichever physician was reviewing PFTs at the time), and I was still supposed to somehow select just a single spirometry effort.

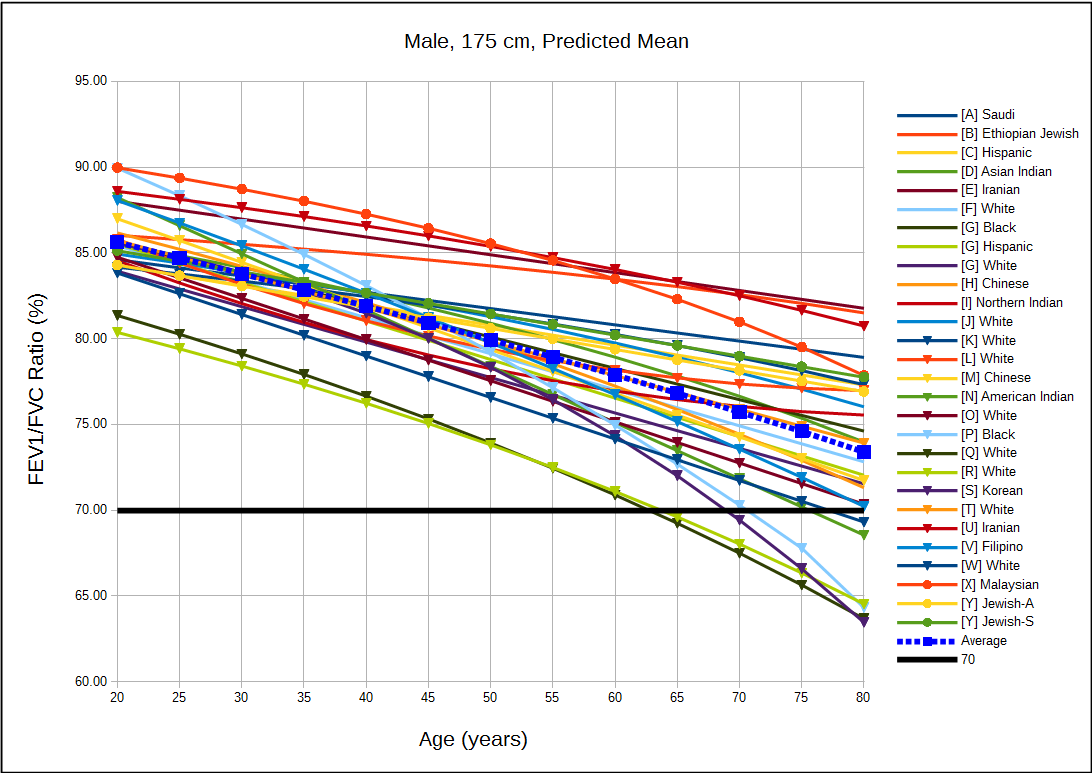

Most recently this was simplified by only having to select the largest FVC (regardless of test quality) from any spirometry effort and then the largest FEV1 as long as it came from a spirometry effort with good quality. This is pretty much in accord with the ATS/ERS spirometry standards but with one important difference: we use Peak Expiratory Flow (PEF) as an indicator of test quality.
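For anyone who thinks better in code, here is a minimal sketch of that selection rule. The `Effort` class, the `good_quality` flag and the sample numbers are all made up for illustration; how an effort earns "good quality" (including how PEF figures into it) is deliberately left out.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Effort:
    fvc: float          # litres
    fev1: float         # litres
    good_quality: bool  # hypothetical flag: did the effort pass the lab's quality checks?


def select_reported_values(efforts: List[Effort]) -> Tuple[float, Optional[float]]:
    """Largest FVC from any effort; largest FEV1 from good-quality efforts only."""
    best_fvc = max(e.fvc for e in efforts)
    good_fev1s = [e.fev1 for e in efforts if e.good_quality]
    # If no effort has good quality there is no reportable FEV1 under this rule.
    best_fev1 = max(good_fev1s) if good_fev1s else None
    return best_fvc, best_fev1


# Made-up values, for illustration only.
efforts = [
    Effort(fvc=3.10, fev1=2.40, good_quality=True),
    Effort(fvc=3.25, fev1=2.55, good_quality=False),
    Effort(fvc=3.05, fev1=2.45, good_quality=True),
]
print(select_reported_values(efforts))  # (3.25, 2.45)
```

Note that the FVC and FEV1 come from different efforts here: the second effort supplies the FVC even though its FEV1 is excluded.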

Strictly speaking the ATS/ERS standards state that

“The largest FVC and the largest FEV1 (BTPS) should be recorded after examining the data from all of the usable curves, even if they do not come from the same curve.”

There are, of course, a number of quality indicators for spirometry efforts that are used to indicate whether a curve is “usable”. These include things like back-extrapolation, expiratory time, terminal expiratory flow rate and repeatability but the one thing they do not include is PEF.

Although PEF is not part of the ATS/ERS criteria, the reason we use it in the selection process is found in the phrase “maximal forced effort” that is part of the ATS/ERS definition for FVC and FEV1. It has long been recognized (certainly since the early 1980s and most likely earlier) that the FVC and FEV1 from a submaximal spirometry effort are often higher than the FVC and FEV1 from a maximal effort. So, is the largest FEV1 correct (as long as it meets the basic ATS/ERS criteria) or should it be the FEV1 from the effort with the highest PEF?

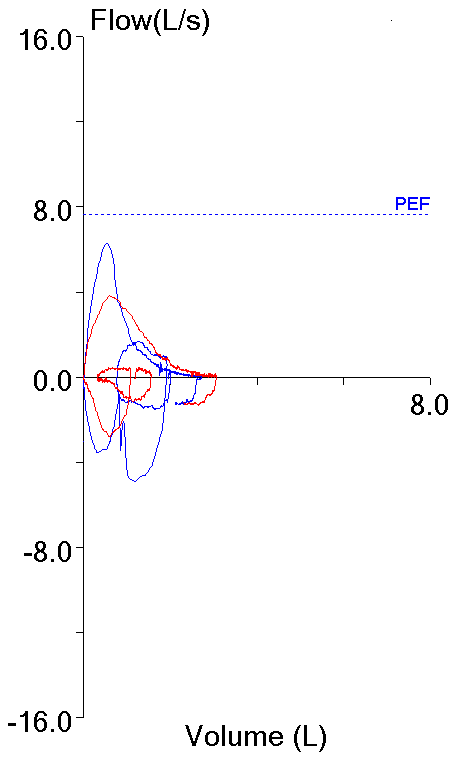

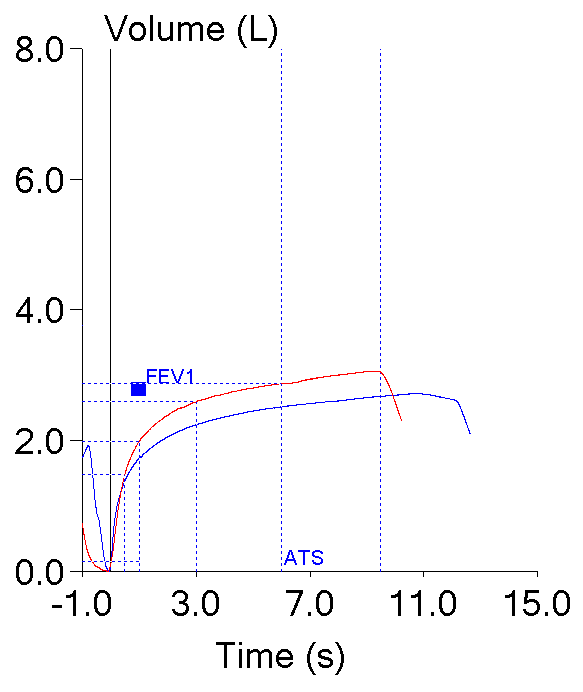

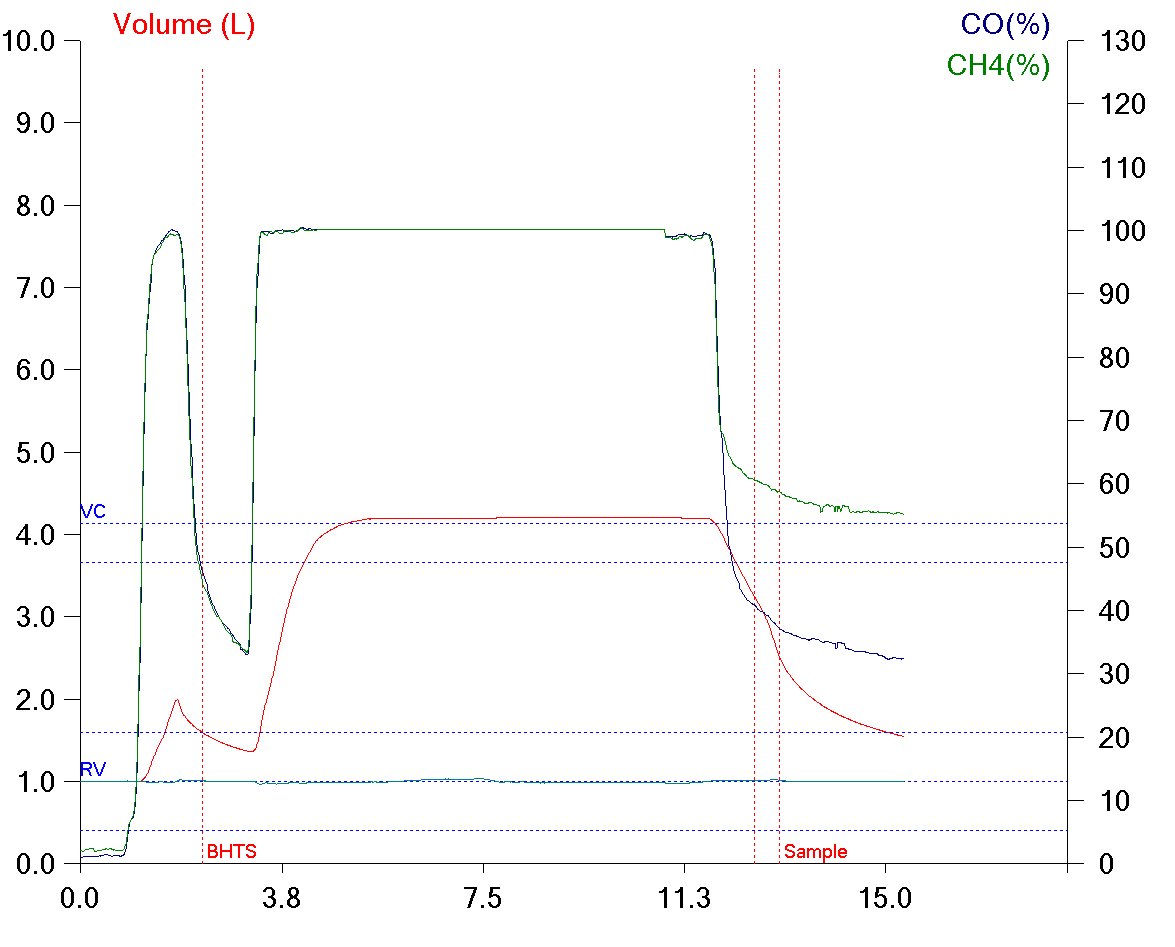

These two efforts from the same patient testing session highlight this dilemma. Both meet the ATS/ERS criteria for the start of the test, which is what primarily applies to FEV1 (and PEF).

| | Blue: | Red: |
|---|---|---|
| FVC (L): | 2.72 | 3.06 |
| FEV1 (L): | 1.73 | 1.99 |
| PEF (L/sec): | 6.28 | 3.82 |
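To make the dilemma concrete, here is a small sketch that applies both candidate rules to the Blue and Red values listed above. It assumes the first column of numbers belongs to the Blue effort and the second to the Red effort, and that both efforts count as usable; the dictionary layout and variable names are only illustrative.

```python
# Values from the two efforts above (assumed column order: Blue, then Red).
efforts = {
    "blue": {"fvc": 2.72, "fev1": 1.73, "pef": 6.28},
    "red": {"fvc": 3.06, "fev1": 1.99, "pef": 3.82},
}

# Rule 1: report the largest FEV1 from any usable effort.
by_largest_fev1 = max(efforts, key=lambda name: efforts[name]["fev1"])

# Rule 2: report the FEV1 from the effort with the highest PEF.
by_highest_pef = max(efforts, key=lambda name: efforts[name]["pef"])

fev1_largest = efforts[by_largest_fev1]["fev1"]
fev1_highest_pef = efforts[by_highest_pef]["fev1"]
diff = fev1_largest - fev1_highest_pef

print(f"Largest FEV1: {by_largest_fev1} effort, FEV1 = {fev1_largest:.2f} L")
print(f"Highest PEF:  {by_highest_pef} effort, FEV1 = {fev1_highest_pef:.2f} L")
print(f"Difference:   {diff:.2f} L")
```

Under these assumptions the two rules pick different efforts, and the reported FEV1 differs by 0.26 L depending on which rule is used.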